ETHICS, AI, AND COMPUTING | PERSPECTIVES FROM MIT

Computing is Deeply Human | Stefan Helmreich and Heather Paxson

Social and cultural assumptions shape AI.

Heather Paxson and Stefan Helmreich (Helmreich photo by Jon Sachs)

“Computing is a human practice that entails judgment and is embedded in politics. Computing is not an external force that has an ‘impact’ on society; instead, society is built right into making, programming, and using computers.”

— Heather Paxson, William R. Kenan, Jr. Professor of Anthropology, and Stefan Helmreich, Elting E. Morison Professor of Anthropology


Stefan Helmreich is the Elting E. Morison Professor of Anthropology and author of the award-winning Alien Ocean: Anthropological Voyages in Microbial Seas (University of California Press, 2009). His research areas include the anthropology of science and technology and the ethnography of information science. His numerous publications include Silicon Second Nature: Culturing Artificial Life in a Digital World (University of California Press, 1998), an ethnography of computer modeling in the life sciences.

Heather Paxson is the William R. Kenan, Jr. Professor of Anthropology, a MacVicar Faculty Fellow, and interim head of the Anthropology Section. Her research centers on how people craft a sense of themselves as moral beings through everyday practices, especially those having to do with family and food. She is the author of Making Modern Mothers: Ethics and Family Planning in Urban Greece (University of California Press, 2004) and The Life of Cheese: Crafting Food and Value in America (University of California Press, 2013).

• • •

Q: What ethical and societal challenges do you see emerging or increasing as computing and AI tools have an accelerating role in shaping human culture?

In 1950, mathematician Alan Turing proposed that computers might “pass” as intelligent if they delivered convincing imitations of human communication. Leading up to the 2016 U.S. presidential election, lively Russian bots and fake Americans alike passed the Turing Test for many Internet users. The online speech acts generated by these entities met cultural expectations not about intelligence, real or artificial, so much as about what might count as a real American.

This story of digital duplicity underscores a vital fact: Computing is a human practice that entails judgment and is embedded in politics. Computing is not an external force that has an “impact” on society; instead, society — institutional structures that organize systems of social norms — is built right into making, programming, and using computers.

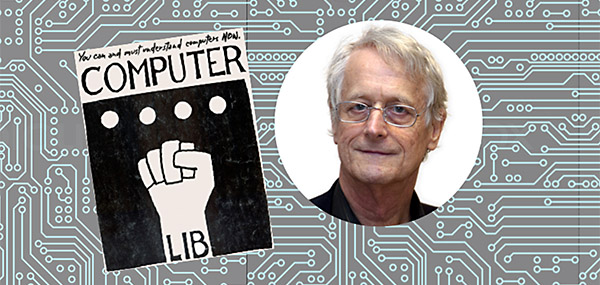

Many computer scientists and historians of computing have made this argument. In 1974, Ted Nelson’s Computer Lib called for computers to be recognized as political objects with many possible histories and futures. Joy Rankin’s A People’s History of Computing in the United States shows that personal, networked computing emerged not only from the conjuncture of ARPAnet (the network of the U.S. Advanced Research Projects Agency) with Silicon Valley, but also from an “interactive commons” dating back to the 1960s. In that commons, teachers, hobbyists, and students, many of them women, created infrastructures for digital action, including in education.

Cover of first edition of Computer Lib, self-published by Ted Nelson in 1974

"At this juncture in history, when xenophobia, hostility to science, and antagonism to health care as a human right are openly on the rise, we need to recognize that the computational is political."

— Stefan Helmreich, Elting E. Morison Professor of Anthropology and Heather Paxson, William R. Kenan, Jr. Professor of Anthropology

A tool for good or ill

But while computer networking was envisioned early on as a possible forum for countercultural expression, it did not take long for personal empowerment through computing to be co-opted, first as a marketing strategy and then as a profitable resource for data-mining by social media platforms. As historian Fred Turner recently suggested, the very tools designed by 1960s counterculturalists are now taken up by the anti-establishment alt-right to promote a new authoritarianism that Turner views as “a product of the political vision that helped drive the creation of social media in the first place — a vision that distrusts public ownership and the political process while celebrating engineering as an alternative form of governance.”

“Don’t Be Evil,” Google’s unofficial motto until 2015, was never sufficient as a guide for collective social enterprise. “Ethics” must not be conflated with strictly individual judgments about good or bad. Ethical judgments are built into our information infrastructures themselves. That’s what AI does: It automates judgments — yes, no; right, wrong.

For example, computer scientist Joy Buolamwini, working in MIT’s Media Lab, tracks how facial recognition algorithms encode racialized (and racist) modes of seeing human faces. Making people of color more visible is vital to U.S. democracy. Yet in a white supremacist society, where inflated rates of incarceration for people of color continue national legacies of marginalization, we should also ask whether it is an unmediated good to make people of color more recognizable to algorithms. Neither engineers nor corporate bodies beholden to stockholders are prepared to answer this question alone.

A collective spirit

Today’s headlines might suggest that corporate data-mining has supplanted community-building as the motivating force of commercial computing. But collective spirit still exists. Christopher Kelty examined the early-2000s rise of open source and free software politics across the United States, Europe, and India, finding that the making of such artifacts occurred alongside the making of the communities that believed in them.

Anita Say Chan explored similar dynamics in Peru, while Eden Medina’s Cybernetic Revolutionaries chronicles how 1970s democratic socialists in Chile sought to encode distributive economic management into computational infrastructure (an experiment that was halted by an authoritarian military coup). Countering the generalizations of large-scale abstractions such as “societal benefit” or “human ethics,” anthropologists and cultural historians examine how specific communities grapple with the affordances and hazards of computing.

The politics matter

The specifics always matter, as do the politics. Computing can and should be encouraged in such venues as community organizing, participatory budgeting, and countering gerrymandering (see the work of mathematician Moon Duchin). Lilly Irani, a computer scientist and ethnographer who has critiqued the labor relations emerging from the “clickwork” performed by “Turkers” on Amazon Mechanical Turk, seeks to build platforms that offer alternatives to such exploitation.

To approach computing as a cultural endeavor means recognizing that the development and distribution of computing tools is about more than their eventual application. The work of programmers, digital designers, and hackers is motivated by values beyond mere instrumentalism. Computing is a vocation. In making computational tools, coders make themselves into particular kinds of engineers, designers, entrepreneurs, educators, or activists. For example, as incoming MIT Professor of Anthropology Héctor Beltrán has described, hackers in Mexico City navigate multiple commitments and identities as they move between engaging computers as tools for social activism and using them as entrepreneurial outlets.

Computational cultures

The social significance of computing includes its role as a form of work through which people seek personal satisfaction as well as livelihoods. Once we recognize that, we can also think about promoting educational and workplace values and environments that enable the creative flourishing of a greater diversity of programmers, who may in turn bring a greater diversity of ideas, not only for new products but also for new modes of working.

Toward this end, the MIT Schwarzman College of Computing should integrate into its curricular foundations a requirement to learn about the cross-cultural variety, history, and political philosophy of computing practices, that is, about computational cultures, the subject of a new concentration being developed by the School of Humanities, Arts, and Social Sciences.

Further, the College of Computing should dedicate itself to a robust project of recruiting and retaining greater numbers of women and people of color in its professional fields, and to ensuring that students from a range of socioeconomic backgrounds are able to participate and feel welcome doing so. At this juncture in history, when xenophobia, hostility to environmental science and regulation, and antagonism to health care as a human right are openly on the rise, we badly need a kind of public computing that recognizes, to adapt a phrase, that the computational is political.

MIT can make that recognition one of the pillars of its aspiration to help build a better world.

Suggested links

Series:

Ethics, Computing, and AI | Perspectives from MIT

Stefan Helmreich:

MIT Anthropology

Heather Paxson:

MIT webpage

MIT Anthropology

Stories:

In search of a meaningful life

MIT anthropology course offers contemplation and dialogue on life's big questions.

The subculture of cheese

Anthropologist Heather Paxson studies American cheese-makers.

In Profile: Heather Paxson

Anthropologist examines ‘everyday ethics.’

Stefan Helmreich conducts fieldwork aboard the unique FLIP ship

MIT anthropologist is researching how scientists understand waves.

3 Questions: Stefan Helmreich on wave science

MIT anthropologist of science explores how scientific “things” emerge.

Stefan Helmreich wins Gregory Bateson Book Prize for Alien Ocean

Research examines the world of deep sea marine microbiologists.

Aliens at sea

MIT anthropologist Helmreich studies researchers studying ocean microbes.

Ethics, Computing and AI series prepared by MIT SHASS Communications

Office of Dean Melissa Nobles

MIT School of Humanities, Arts, and Social Sciences

Series Editor and Designer: Emily Hiestand, Communication Director

Series Co-Editor: Kathryn O'Neill, Associate News Manager, SHASS Communications

Published 18 February 2019