THE HUMAN FACTOR SERIES

Interview: David Mindell

on the human dimension of technological innovation

“The new frontier is learning how to design the relationships between people, robots, and infrastructure. We need new sensors, new software, new ways of architecting systems.”

— David Mindell, Frances and David Dibner Professor of the History of Engineering and Manufacturing (STS), and Professor of Aeronautics and Astronautics

About The Human Factor Series

MIT is working to advance solutions to major issues in energy, education, the environment, and health. For example: How can we reduce morbidity and mortality in cancer cases? How can we halve carbon output by 2050? How can we provide quality education to all people who wish to learn? As the editors of the journal Nature have said, framing such questions effectively — incorporating all factors that influence the issue — is a key to generating successful solutions.

Science and technology are essential tools for innovation, and to reap their full potential, we also need to articulate and solve the many aspects of today’s global issues that are rooted in the political, cultural, and economic realities of the human world. With that mission in mind, MIT's School of Humanities, Arts, and Social Sciences has launched The Human Factor — a series of interviews that highlight research on the human dimensions of global challenges. Contributors to this series also share ideas for cultivating the multidisciplinary collaborations needed to solve the major civilizational issues of our time.

David Mindell, Frances and David Dibner Professor of the History of Engineering and Manufacturing (STS) and Professor of Aeronautics and Astronautics, researches the intersections of human behavior, technological innovation, and automation. Mindell is the author of five acclaimed books, most recently Our Robots, Ourselves: Robotics and the Myths of Autonomy (Viking, 2015), as well as the co-founder of Humatics Corporation, which develops technologies for human-centered automation.

______

Q: A major theme in recent political discourse has been the perceived impact of robots and automation on the United States labor economy. In your research into the relationship between human activity and robotics, what insights have you gained that inform the future of human jobs, and the direction of technological innovation?

A: In looking at how people have designed, used, and adopted robotics in extreme environments like the deep ocean, aviation, or space, my most recent work shows how robotics and automation carry with them human assumptions about how work gets done, and how technology alters those assumptions. For example, the US Air Force’s “Predator” drones were originally envisioned as fully autonomous, able to fly without any human assistance; in the end, these drones require hundreds of people to operate.

The new success of robots will depend on how well they fit into human environments; as in chess, the strongest players are often combinations of human and machine. I increasingly see that the three critical elements are people, robots, and infrastructure, all interdependent.

Q: In your recent book Our Robots, Ourselves, you describe the success of a “human-centered robotics,” and explain why it is the more promising research direction, rather than research that aims for total robotic autonomy. How is your perspective being received by robotic engineers and other technologists, and do you see examples of research projects that are aiming at “human-centered robotics”?

A: One still hears researchers describe “full autonomy” as the only way to go; often they overlook the multitude of human intentions built into even the most autonomous systems, and the infrastructure that surrounds them.

My work describes “situated autonomy,” where autonomous systems can be highly functional within human environments such as factories or cities. Autonomy as a means of moving through physical environments has made enormous strides in the past ten years; as a means of moving through human environments, it is only just beginning.

The new frontier is learning how to design the relationships between people, robots, and infrastructure. We need new sensors, new software, new ways of architecting systems.

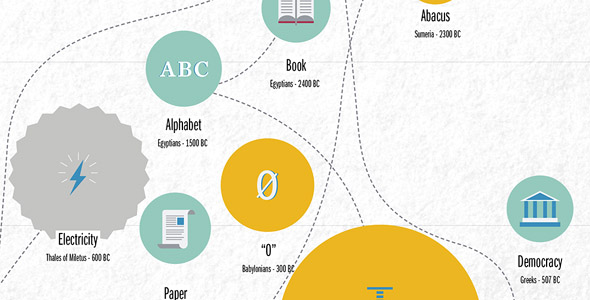

Timeline of historic inventions

Q: What can the study of the history of technology teach us about the future of robotics?

A: The history of technology does not predict the future, but it does offer rich examples of how people build and interact with technology, and how it evolves over time. Some problems just keep coming up over and over again, in new forms in each generation. When the historian notices such patterns, he can begin to ask: is there some fundamental phenomenon here? If it is fundamental, how is it likely to appear in the next generation? Might the dynamics be altered in unexpected ways by human or technical innovations?

One such pattern is how autonomous systems have been rendered less autonomous when they make their way into real-world human environments. Like the “Predator” drone, future military robots will likely be linked to human commanders and analysts in some ways as well. Rather than eliding those links, designing them to be as robust and effective as possible is a worthy focus for researchers’ attention.

Q: President Reif has said that the solutions to today’s challenges depend on marrying advanced technical and scientific capabilities with a deep understanding of the world’s political, cultural, and economic realities. What barriers do you see to multi-disciplinary, sociotechnical collaborations, and how can we overcome them?

A: I fear that as our technical education and research continue to excel, we are building human perspectives into technologies in ways not visible to our students.

All data, for example, are socially inflected, and we are building systems that learn from those data and act in the world. As a colleague from Stanford recently observed, go to Google image search and type in “Grandma” and you’ll see the social bias that can leak into data sets: the top results all appear white and middle class.

Now think of those data sets as bases of decision making for vehicles like cars or trucks, and we become aware of the social and political dimensions that we need to build into systems to serve human needs. For example, should driverless cars adjust their expectations for pedestrian behavior according to the neighborhoods they’re in?

Meanwhile, too much of the humanities has developed islands of specialized discourse that are inaccessible to outsiders. I used to be more optimistic about multi-disciplinary collaborations to address these problems. Departments and schools are great for organizing undergraduate majors and graduate education, but the old “two cultures” divides remain deeply embedded in the daily practices of how we do our work. I’ve long believed MIT needs a new school to address these synthetic, far-reaching questions and train students to think in entirely new ways.

Interview prepared by MIT SHASS Communications

Editorial team: Emily Hiestand (series editor) and Daniel Pritchard

Photograph: courtesy of David Mindell